In-memory DBs are often used as a cache for disk databases, because RAM is far faster than any disk, even an SSD. Typical situations are large spikes in traffic: IMDb on the day Zack Snyder's cut of Justice League is released, Amazon a week before Christmas, or Uber on a Friday night.
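To make the caching idea concrete, here is a minimal cache-aside sketch in Lua. It is only an illustration: `make_cached_reader` and `slow_lookup` are hypothetical names, and `slow_lookup` stands in for a query against a disk database. A plain Lua table plays the role of the in-memory store.

```lua
-- Cache-aside sketch: an in-memory table in front of a slow "disk" lookup.
-- slow_lookup is a hypothetical stand-in for a query against a disk database.
local function make_cached_reader(slow_lookup)
  local cache = {}                        -- in-memory store: key -> value
  local stats = { hits = 0, misses = 0 }

  local function get(key)
    local value = cache[key]
    if value ~= nil then
      stats.hits = stats.hits + 1
      return value
    end
    stats.misses = stats.misses + 1
    value = slow_lookup(key)              -- fall through to the disk database
    if value ~= nil then
      cache[key] = value                  -- remember it for the next request
    end
    return value
  end

  return get, stats
end

-- Usage: the second read of the same key never reaches the slow store.
local disk_reads = 0
local get, stats = make_cached_reader(function(key)
  disk_reads = disk_reads + 1
  return "title for " .. key
end)

get("movie:1")
get("movie:1")
print(disk_reads, stats.hits, stats.misses)
```

During a traffic spike most requests repeat a small set of keys, so almost all of them would be served from the table rather than from disk. The sketch never evicts or invalidates entries, which a real cache would have to do.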

What is an in-memory database, or IMDB? It is a database that stores all of its data in RAM; Redis and Tarantool are both in-memory technologies. The size of such a database is limited by the RAM capacity of the node. This limits the amount of data but greatly increases speed. In-memory DBs can also persist data to disk, so a node can be restarted without losing information. Today, in-memory DBs can already be used as the main storage in production. For instance, Cloud Solutions uses Tarantool as the main DB to store metadata in their S3-compatible object repository. In-memory DBs are also used for high-speed data access, capable of 10,000 requests per second.
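One simple way to see how data held in RAM can survive a restart is an append-only log that is replayed on startup. The Lua sketch below illustrates that general idea only; it is not how Redis or Tarantool implement persistence. The `Store` type and the one-line-per-write file format are assumptions made for this example.

```lua
-- Sketch of an in-memory key-value store that survives a restart by
-- appending every write to a log file and replaying it on startup.
-- Assumption: one "key<TAB>value" line per write, so keys and values
-- must not contain tabs or newlines.
local Store = {}
Store.__index = Store

function Store.open(path)
  local self = setmetatable({ path = path, data = {} }, Store)
  local log = io.open(path, "r")
  if log then
    -- Replay the log: later lines overwrite earlier ones.
    for line in log:lines() do
      local key, value = line:match("^([^\t]*)\t(.*)$")
      if key then self.data[key] = value end
    end
    log:close()
  end
  return self
end

function Store:set(key, value)
  -- Write to disk first, then update RAM.
  local log = assert(io.open(self.path, "a"))
  log:write(key, "\t", value, "\n")
  log:close()
  self.data[key] = value
end

function Store:get(key)
  return self.data[key]   -- reads never touch the disk
end

local path = os.tmpname()
local store = Store.open(path)
store:set("bucket/object-1", "size=42")
store:set("bucket/object-1", "size=43")

-- Simulate a node restart: build a fresh in-memory state from the log.
local restarted = Store.open(path)
print(restarted:get("bucket/object-1"))
os.remove(path)
```

Reads are served entirely from the table, and only writes pay the cost of disk I/O. The log in this sketch grows without bound; compacting it is left out.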


In this article, I am going to look at Redis versus Tarantool. At first glance, they are quite alike: in-memory, NoSQL, key-value. My goal is to find meaningful similarities and differences; I am not going to claim that one is better than the other. First, we'll find out what an in-memory database, or IMDB, is. When and how are they better than disk solutions? What about their efficiency, reliability, and scaling? Then we'll delve into technical details: data types, iterators, indexes, transactions, programming languages, replication, and connectors. Feel free to scroll down to the most interesting part, or even to the summary comparison table at the very bottom of the article.

LUA TABLE INSERT SORTED INMEMORY HASHMAP CODE

"Title": "dup.lua - in our code repository.", "SummaryLine": "Is message a duplicate?", "Desc": "True if the message is detected as a duplicate" "Desc": "Checks to see if a message is a duplicate based off in memory buffer of MD5 hashes it gets from the log", Check if current message is in lookup table Iguana.logInfo('dup.lua: Finished Initial fetch/store of ' Iguana.logInfo('dup.lua: Storing Initial Messages!') Local messages = fetchMessages(LookbackAmount) Iguana.logInfo('dup.lua: Fetching Initial Messages!') Messages are retrived from API in a table sorted newest -> oldest. Populate in memory structures with lookbackAmount # of messages. Local LookbackAmount = Params.lookback_amount Will force queue to always be empty so fetch/store annotations Uncomment line below when testing annotations. Local CurrentTimestamp = os.ts.date("%Y/%m/%d %X", CurrentTime) Remove oldest message so queue size equals lookback amount. Local function refreshStoredMessages(LookbackAmount) Local port = iguana.webInfo().web_config.port

Local protocol = iguana.webInfo().web_e_https and 'https' or 'http' Local function fetchMessages(LookbackAmount) LoggedMessageStamp = X.export:child("message", i).time_stamp:nodeValue()ĭup.lookup = LoggedMessageStampĭup.queue:enqueue(createMsgNode(LoggedMessageHash)) LoggedMessageHash = util.md5(LoggedMessage) LoggedMessage = X.export:child("message", i).data:nodeValue() Local numMessages = X.export:childCount('message')

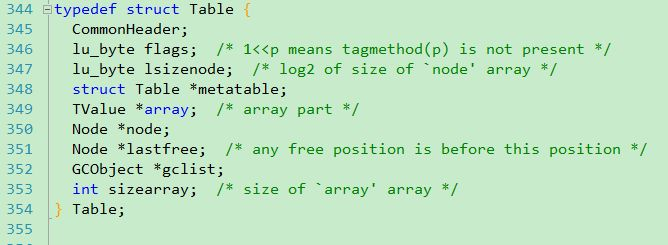

Local LoggedMessage, LoggedMessageHash, LoggedMessageStamp = nil, nil, nil If self.size > 0 then return self.head end Retrieve node from front of queue without removing If self.size = 0 then self.tail = nil end Remove node from front of queue and return it messages themselves limits the amount of memory overhead. Since the whole thing operates in memory after startup it should be fast and the use of MD5 hashes rather than storing the raw are intended to be efficient - lookups done using a hashmap and maintaining the list of messages to expire using a linked list. After that it maintains a linked list of MD5 hashes of messages in memory. When a channel first starts or you edit this script this module will query the history of
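The combination described here, a hashmap for lookups plus a linked-list queue for expiring old entries, can be sketched in plain Lua as follows. This is a simplified illustration rather than the module's actual code: `new_detector` and `hash_fn` are hypothetical names, and `tostring` is used as a placeholder hash because `util.md5` exists only inside Iguana.

```lua
-- Duplicate detector sketch: hashmap for lookups, linked-list queue for expiry.
-- hash_fn is a caller-supplied function; tostring is only a placeholder here.
local function new_detector(lookback, hash_fn)
  hash_fn = hash_fn or tostring
  local lookup = {}                    -- hash -> true, for O(1) membership tests
  local head, tail, size = nil, nil, 0

  local function enqueue(hash)
    local node = { hash = hash, next = nil }
    if tail then tail.next = node else head = node end
    tail = node
    size = size + 1
  end

  local function dequeue()
    local node = head
    if not node then return nil end
    head = node.next
    size = size - 1
    if size == 0 then tail = nil end
    return node
  end

  -- Returns true if msg is among the last `lookback` distinct messages.
  return function(msg)
    local hash = hash_fn(msg)
    if lookup[hash] then return true end
    lookup[hash] = true
    enqueue(hash)
    while size > lookback do
      lookup[dequeue().hash] = nil     -- expire the oldest entry
    end
    return false
  end
end

local is_duplicate = new_detector(2)
print(is_duplicate("A"))   -- first sighting
print(is_duplicate("B"))
print(is_duplicate("A"))   -- still inside the lookback window
print(is_duplicate("C"))   -- pushes "A" out of the window
print(is_duplicate("A"))   -- no longer remembered
```

Both the lookup and the expiry step take constant time per message, and memory use is bounded by the lookback size. Storing a fixed-length hash instead of the raw message keeps each entry small, at the cost of a very small chance of hash collisions.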